Agent evaluation is a control plane (not a dashboard)

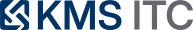

Agentic systems fail in tool use, memory, and recovery, not just by giving wrong answers. Treat evaluation like a control plane: traces → metrics → release gates, with scorecards that point to root causes.

KMS ITC

If your system is prompt → answer, evaluation is mostly about output quality.

If your system is an agent, evaluation is about behaviour over time:

- did it choose the right tool?

- did it call the tool correctly (parameters, auth, retries)?

- did it recover from failures without looping or escalating blast radius?

- did it stay inside policy boundaries?

- did it finish within latency and cost budgets?

That’s why “one score” dashboards often fail in production: they tell you the agent is worse, but not why.

The architectural move is to treat evaluation as a control plane.

1) The core pattern: traces → metrics → release gates

In large enterprises, the hard part isn’t writing an evaluator—it’s making evaluation repeatable, auditable, and enforceable across teams.

A practical reference architecture looks like this:

What this enables

- Standard inputs: trace format is consistent (agent runs become replayable artefacts)

- Comparable metrics: teams share a common score vocabulary

- Change control: releases are gated by measurable thresholds (not vibes)

- Ops readiness: production drift becomes detectable early
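To make "replayable artefact" concrete, here is a minimal sketch of a trace record that survives a round trip through JSON. The field names (`trace_id`, `tool_calls`, `cost_usd`, and so on) are assumptions for illustration, not a standard schema; adapt them to whatever your tracing stack already emits.

```python
import json
from dataclasses import dataclass, field, asdict
from typing import Optional


@dataclass
class ToolCall:
    tool: str                    # name of the tool the agent chose
    params: dict                 # arguments passed to the tool
    latency_ms: float            # wall-clock time of the call
    error: Optional[str] = None  # None means the call succeeded


@dataclass
class Trace:
    trace_id: str
    prompt: str
    tool_calls: list[ToolCall] = field(default_factory=list)
    final_answer: Optional[str] = None
    cost_usd: float = 0.0

    def to_json(self) -> str:
        # asdict recurses into nested dataclasses, so tool calls serialize too.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "Trace":
        data = json.loads(raw)
        data["tool_calls"] = [ToolCall(**tc) for tc in data["tool_calls"]]
        return cls(**data)


# Hypothetical run: one successful tool call, then a final answer.
trace = Trace(
    trace_id="run-001",
    prompt="Refund order 123",
    tool_calls=[ToolCall("lookup_order", {"order_id": 123}, 84.0)],
    final_answer="Refund issued",
    cost_usd=0.004,
)
restored = Trace.from_json(trace.to_json())
```

Because the stored form reconstructs the same object, any evaluator can be re-run against old traces when its scoring logic changes.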

The uncomfortable tradeoff

Treating evaluation as a control plane introduces platform overhead:

- trace capture/storage costs

- evaluator maintenance

- governance around what “success” means per use case

But the alternative is worse: uncontrolled agent behaviour shipped behind an API.

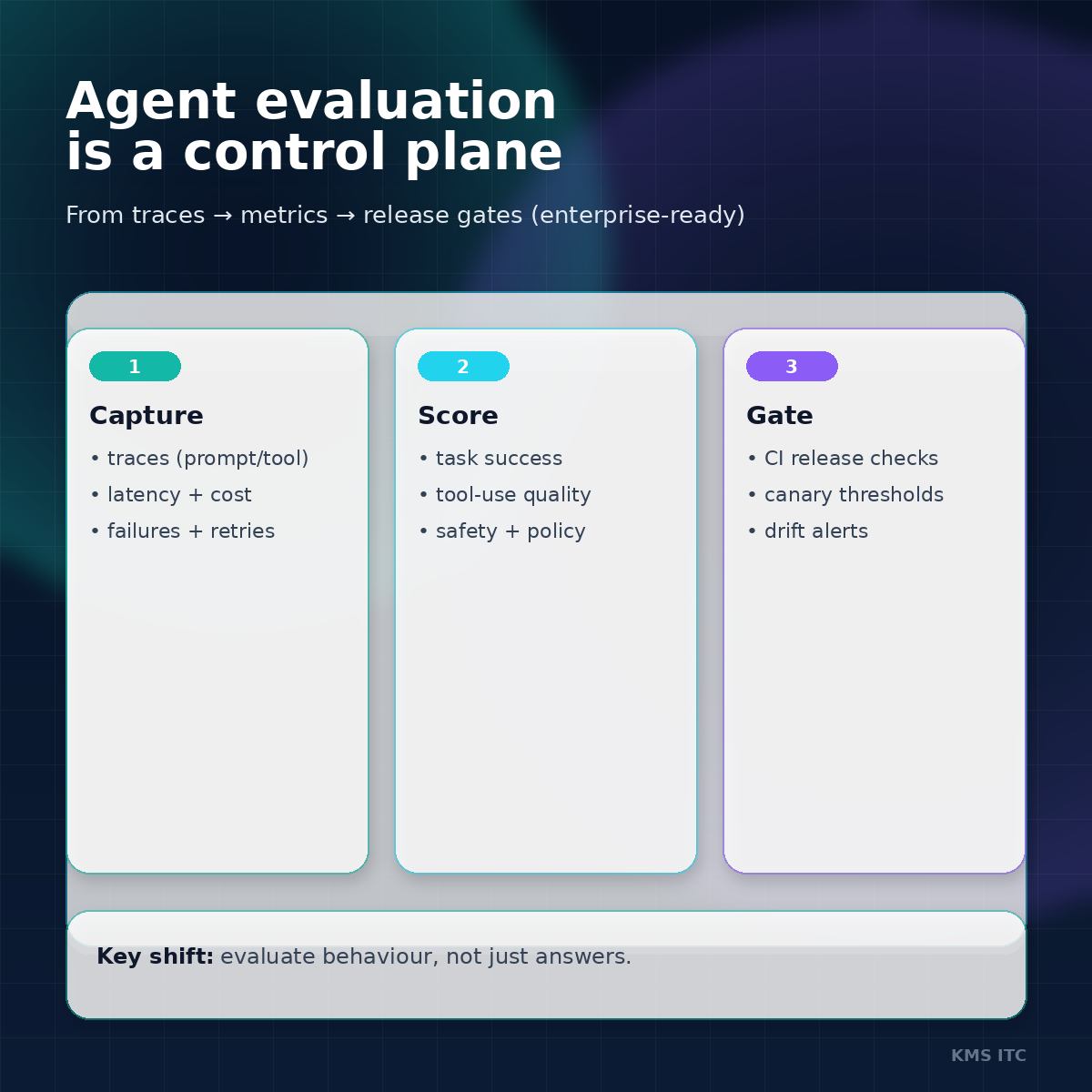

2) Don’t grade only outcomes—grade components

Agents fail “mid-flight”:

- good plan, wrong tool

- right tool, wrong parameters

- correct tool call, auth timeout

- correct retrieval, incorrect synthesis

If you only grade the final answer, these all look the same.

Instead, build scorecards that separate:

- Outcome metrics (did the task complete?)

- Component metrics (where did it break?)

- Model metrics (is this a model choice or a system design issue?)
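One way to sketch the outcome/component split: record a pass/fail check per stage and report the earliest stage that failed as the likely root cause. The stage names and the `scorecard` shape below are illustrative assumptions; how each check is actually computed (rule, heuristic, or model-based grader) is left out.

```python
from typing import Optional

# Stages in the order an agent run unfolds (names are illustrative).
STAGES = ["tool_selection", "tool_parameters", "tool_execution", "synthesis"]


def first_failure(checks: dict[str, bool]) -> Optional[str]:
    """Return the earliest stage that failed, or None if every stage passed."""
    for stage in STAGES:
        if not checks.get(stage, False):
            return stage
    return None


def scorecard(checks: dict[str, bool], task_completed: bool) -> dict:
    return {
        "outcome": task_completed,
        "components": {s: checks.get(s, False) for s in STAGES},
        "root_cause": first_failure(checks),
    }


# Two runs with the same failed outcome but different root causes.
wrong_params = scorecard(
    {"tool_selection": True, "tool_parameters": False,
     "tool_execution": False, "synthesis": False},
    task_completed=False,
)
bad_synthesis = scorecard(
    {"tool_selection": True, "tool_parameters": True,
     "tool_execution": True, "synthesis": False},
    task_completed=False,
)
```

Both runs look identical if you grade only the final answer; the `root_cause` field is what tells them apart.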

A useful mental model

If an agent is a distributed system, then:

- traces are your logs

- metrics are your SLOs

- release gates are your change management

3) Enterprise guardrails: define gates that match your blast radius

A control plane becomes valuable when it can stop bad changes.

Start with three gate types:

Gate A — Success

- task success rate on a golden set

- regression threshold (e.g., “a drop of no more than 2% vs the last release”)
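A success gate along these lines could be sketched as follows. The 0.90 floor is a made-up default, and the 2% regression limit is read here as 2 percentage points of absolute success rate; both are assumptions to adjust per use case.

```python
def success_rate(results: list[bool]) -> float:
    """Fraction of golden-set tasks that passed."""
    if not results:
        raise ValueError("golden set produced no results")
    return sum(results) / len(results)


def success_gate(
    candidate: list[bool],
    baseline_rate: float,
    min_rate: float = 0.90,   # assumed absolute floor
    max_drop: float = 0.02,   # assumed limit: 2 percentage points
) -> tuple[bool, str]:
    rate = success_rate(candidate)
    if rate < min_rate:
        return False, f"success rate {rate:.2%} is below the floor {min_rate:.2%}"
    if baseline_rate - rate > max_drop:
        return False, f"regression of {baseline_rate - rate:.2%} exceeds {max_drop:.2%}"
    return True, f"success rate {rate:.2%} is within limits"


# Hypothetical golden-set results for two candidate releases (100 tasks each).
ok, ok_reason = success_gate([True] * 94 + [False] * 6, baseline_rate=0.95)
blocked, blocked_reason = success_gate([True] * 92 + [False] * 8, baseline_rate=0.95)
```

Returning a reason string alongside the boolean keeps the gate auditable: a blocked release says which threshold it tripped.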

Gate B — Safety / policy

- data boundary violations (PII/secret leakage)

- restricted tool access (e.g., “no write actions in sandbox tier”)

- ungrounded claims in hallucination-sensitive domains (finance/legal/medical)

Gate C — Budget (latency + cost)

- p95 latency threshold

- token/tool-call budget per task

- “failure loop” detection (retries without progress)
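The budget checks above can be approximated with two small functions. This is a sketch under assumptions: "retries without progress" is interpreted as consecutive failing calls with the same tool, parameters, and error, and p95 uses the nearest-rank method. Tool calls are plain dicts with hypothetical `tool`, `params`, and `error` keys.

```python
import json


def p95(latencies_ms: list[float]) -> float:
    """Nearest-rank 95th percentile."""
    if not latencies_ms:
        raise ValueError("no latency samples")
    ordered = sorted(latencies_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) using integer math
    return ordered[rank - 1]


def has_failure_loop(calls: list[dict], max_repeats: int = 3) -> bool:
    """True if the same failing call repeats max_repeats times in a row."""
    streak = 0
    previous = None
    for call in calls:
        if call.get("error") is None:
            # A successful call counts as progress and resets the streak.
            streak, previous = 0, None
            continue
        signature = (
            call["tool"],
            json.dumps(call["params"], sort_keys=True),
            call["error"],
        )
        streak = streak + 1 if signature == previous else 1
        previous = signature
        if streak >= max_repeats:
            return True
    return False


failing = {"tool": "get_invoice", "params": {"id": 7}, "error": "401 Unauthorized"}
looping_run = [failing, failing, failing]
recovering_run = [failing, failing, {"tool": "refresh_token", "params": {}, "error": None}, failing]
```

A retry that changes its parameters or hits a different error starts a new streak, so an agent that is actually adapting is not flagged.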

Architectural implication: your platform needs a notion of agent tiers (sandbox → internal → customer-facing → regulated) so gates can tighten as risk increases.
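As a rough illustration of tiered gates, the thresholds can live in one table keyed by tier. The tier names follow the progression above; every number is a placeholder assumption, not a recommendation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateThresholds:
    min_success_rate: float
    max_policy_violations: int
    p95_latency_ms: int


# Placeholder values: each tier is at least as strict as the one before it.
TIER_ORDER = ["sandbox", "internal", "customer_facing", "regulated"]
TIERS = {
    "sandbox": GateThresholds(0.70, 5, 20_000),
    "internal": GateThresholds(0.85, 1, 10_000),
    "customer_facing": GateThresholds(0.95, 0, 5_000),
    "regulated": GateThresholds(0.98, 0, 3_000),
}


def gate_passes(tier: str, success_rate: float,
                policy_violations: int, p95_latency_ms: float) -> bool:
    t = TIERS[tier]
    return (
        success_rate >= t.min_success_rate
        and policy_violations <= t.max_policy_violations
        and p95_latency_ms <= t.p95_latency_ms
    )


# The same measured results pass in one tier and fail in a stricter one.
sandbox_ok = gate_passes("sandbox", 0.90, 0, 4_000)
regulated_ok = gate_passes("regulated", 0.90, 0, 4_000)
```

Keeping thresholds in data rather than in per-team scripts means promoting an agent to a higher tier is a config change that the same CI gate enforces.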

4) What to do next (a 2-week blueprint)

If you’re scaling agents across teams, this is a pragmatic start:

- Instrument traces first (prompt + tool calls + errors + timings + cost)

- Create a golden set (happy path + edge cases + “known bad” prompts)

- Implement component metrics for tool-use and recovery (not just output grading)

- Add CI release gates (success/safety/budget)

- Stand up drift monitoring in prod (alerts + periodic human audit)

If you do only one thing: make every score link back to a replayable trace.

If you want, I can share a lightweight agent evaluation template (trace schema + scorecard layout + CI gates) that teams can adopt in a week.

Reply with EVAL CONTROL PLANE and tell me your environment (AWS/Azure/GCP, and whether you’re using LangChain/LangGraph/Strands/other) and I’ll tailor it.