AI agents need an evaluation control plane: traces → metrics → release gates

If you can’t measure task success, tool correctness, safety, latency, and cost—at the trace level—you don’t have an AI agent. You have an incident waiting to happen.

KMS ITC

AI agents are not “chatbots with tools”. Architecturally, they’re distributed systems:

- multiple calls (model + memory + tools)

- multiple failure modes (planning mistakes, schema drift, auth failures, tool timeouts)

- emergent behaviour (the same prompt can produce different action sequences)

If you ship agents without a repeatable evaluation loop, you’ll discover quality, safety, and cost issues the worst way: in production.

AWS’s recent work on agent evaluation (AgentCore Evaluations plus a trace-driven workflow) is a good signal of where the industry is going: evaluation is becoming a platform capability, not an ad hoc spreadsheet per team.

1) What changed (and why it matters)

Single-model benchmarks helped when the app was “prompt → answer”. Agents changed the game because success isn’t just what the agent said — it’s what it did:

- Did it choose the right tool?

- Did it pass correct parameters?

- Did it recover when a tool failed?

- Did it stay within policy boundaries?

- Did it complete the goal within an acceptable cost/latency budget?

This is why trace-based evaluation is becoming the default: you need to score the whole execution, not just the final text.
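To make "score the whole execution" concrete, here is a minimal sketch of a trace-level scorer. The `Trace` and `ToolCall` shapes, the tool name `inventory_lookup`, and the substring check used for answer correctness are all assumptions for illustration; a real evaluator would likely use richer trace data and a stronger correctness check.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    params: dict
    ok: bool = True  # False if the tool errored or timed out


@dataclass
class Trace:
    final_answer: str
    tool_calls: list = field(default_factory=list)
    latency_s: float = 0.0
    cost_usd: float = 0.0


def score_trace(trace, expected_tools, expected_answer, max_latency_s, max_cost_usd):
    """Score what the agent did, not only what it said."""
    calls = trace.tool_calls
    successful = [c.name for c in calls if c.ok]
    # A failure counts as recovered if a later call to the same tool succeeded.
    recovered = all(
        any(later.ok and later.name == c.name for later in calls[i + 1:])
        for i, c in enumerate(calls)
        if not c.ok
    )
    return {
        "answer_correct": expected_answer.lower() in trace.final_answer.lower(),
        "tools_correct": successful == expected_tools,
        "recovered_from_failures": recovered,
        "within_budget": trace.latency_s <= max_latency_s
        and trace.cost_usd <= max_cost_usd,
    }


# Hypothetical trace: the first tool call times out, the retry succeeds.
trace = Trace(
    final_answer="There are 12 units in stock.",
    tool_calls=[
        ToolCall("inventory_lookup", {"sku": "A-100"}, ok=False),
        ToolCall("inventory_lookup", {"sku": "A-100"}, ok=True),
    ],
    latency_s=2.1,
    cost_usd=0.004,
)
result = score_trace(trace, ["inventory_lookup"], "12 units", 5.0, 0.01)
```

Each key in `result` maps to one of the questions above, so a regression in tool choice or recovery shows up even when the final text still looks fine.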

2) The enterprise architecture implication: eval is a control plane

Treat evaluation like an enterprise control plane, similar to:

- API gateways (policy + observability)

- container registries (trusted artefacts + promotion)

- CI/CD (repeatable release gates)

In practice this means:

- Product teams build agent use cases.

- A shared evaluation service provides trace capture, standard metrics, dashboards, and release gating.

- Enterprise architecture (EA) and security teams define the minimum gate set (and the exceptions process).

The win is speed and safety: teams iterate faster because they’re not reinventing the measurement stack.

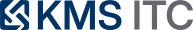

3) A practical agent evaluation architecture

A workable architecture has four moving parts:

- Trace capture (offline and online): capture prompts, responses, tool calls, tool parameters, timing, errors, and cost signals.

- Eval sets: maintain a “golden set” (critical tasks) plus edge cases and adversarial prompts (including prompt injection attempts).

- Evaluation service: score task-level success plus component-level behaviours: tool selection accuracy, parameter accuracy, memory retrieval quality, safety and refusal behaviour.

- Release gates + monitoring: gate agent changes in CI/CD, then watch for drift/decay in production with alerts + rollback playbooks.
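As an illustration of the trace capture part, the sketch below wraps tool calls so that parameters, timing, and errors are recorded as spans. `TraceRecorder`, `Span`, and the `inventory_lookup` tool are hypothetical names, not part of any specific product; in practice you would more likely emit these spans through your existing tracing stack.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class Span:
    tool: str
    params: dict
    duration_s: float
    error: Optional[str] = None


@dataclass
class TraceRecorder:
    spans: list = field(default_factory=list)

    def call(self, tool_name: str, fn: Callable[..., Any], **params: Any) -> Any:
        """Run a tool and record one span, whether it succeeds or raises."""
        start = time.perf_counter()
        error = None
        try:
            return fn(**params)
        except Exception as exc:
            error = f"{type(exc).__name__}: {exc}"
            raise
        finally:
            duration = time.perf_counter() - start
            self.spans.append(Span(tool_name, params, duration, error))


def inventory_lookup(sku: str) -> dict:
    """Hypothetical tool used only for this example."""
    if sku == "missing":
        raise KeyError(sku)
    return {"sku": sku, "in_stock": 12}


recorder = TraceRecorder()
recorder.call("inventory_lookup", inventory_lookup, sku="A-100")
try:
    recorder.call("inventory_lookup", inventory_lookup, sku="missing")
except KeyError:
    pass  # the failure is still captured as a span
```

Recording the failed call as well as the successful one is the point: the evaluation service can only score recovery behaviour if errors are in the trace.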

4) The hidden failure mode: tool onboarding is architecture (not plumbing)

One of the most underappreciated agent problems is tool onboarding at scale.

When an enterprise agent has hundreds of tools available, weak tool definitions create predictable pathologies:

- wrong tool selection (“it called the shipping API instead of inventory”)

- malformed parameters (schema mismatch)

- redundant tool calls that inflate context and cost

- slow multi-step plans that blow latency budgets

The architectural response is to standardise tool schemas, descriptions, and contracts across teams — and then evaluate tool-use with regression tests (golden traces) the same way you treat APIs and SDKs.
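A rough sketch of what a shared tool contract plus a golden-trace regression check could look like follows. The contract format, tool names, and parameter names here are assumptions made up for the example; teams already using JSON Schema or OpenAPI would validate against those instead.

```python
# Hypothetical tool contracts: names, descriptions, and types are illustrative.
TOOL_CONTRACTS = {
    "inventory_lookup": {
        "description": "Return the stock level for one SKU in one warehouse.",
        "params": {"sku": str, "warehouse_id": str},
    },
    "create_shipment": {
        "description": "Create an outbound shipment for an existing order.",
        "params": {"order_id": str, "quantity": int},
    },
}


def validate_call(tool, params):
    """Return a list of contract violations for one tool call (empty means valid)."""
    contract = TOOL_CONTRACTS.get(tool)
    if contract is None:
        return [f"unknown tool: {tool}"]
    spec = contract["params"]
    errors = []
    for name, expected_type in spec.items():
        if name not in params:
            errors.append(f"missing parameter: {name}")
        elif not isinstance(params[name], expected_type):
            got = type(params[name]).__name__
            errors.append(f"{name}: expected {expected_type.__name__}, got {got}")
    for name in params:
        if name not in spec:
            errors.append(f"unexpected parameter: {name}")
    return errors


def check_against_golden(golden_tools, actual_calls):
    """Compare a run with a golden trace: same tool sequence, valid parameters."""
    problems = []
    actual_tools = [tool for tool, _ in actual_calls]
    if actual_tools != golden_tools:
        problems.append(f"tool sequence {actual_tools} != golden {golden_tools}")
    for tool, params in actual_calls:
        problems.extend(f"{tool}: {err}" for err in validate_call(tool, params))
    return problems


# The agent called the shipping tool instead of inventory, with a string quantity.
problems = check_against_golden(
    ["inventory_lookup"],
    [("create_shipment", {"order_id": "O-1", "quantity": "2"})],
)
```

Here `problems` contains two entries: one for the wrong tool sequence and one for the malformed `quantity` parameter, which are the first two pathologies in the list above.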

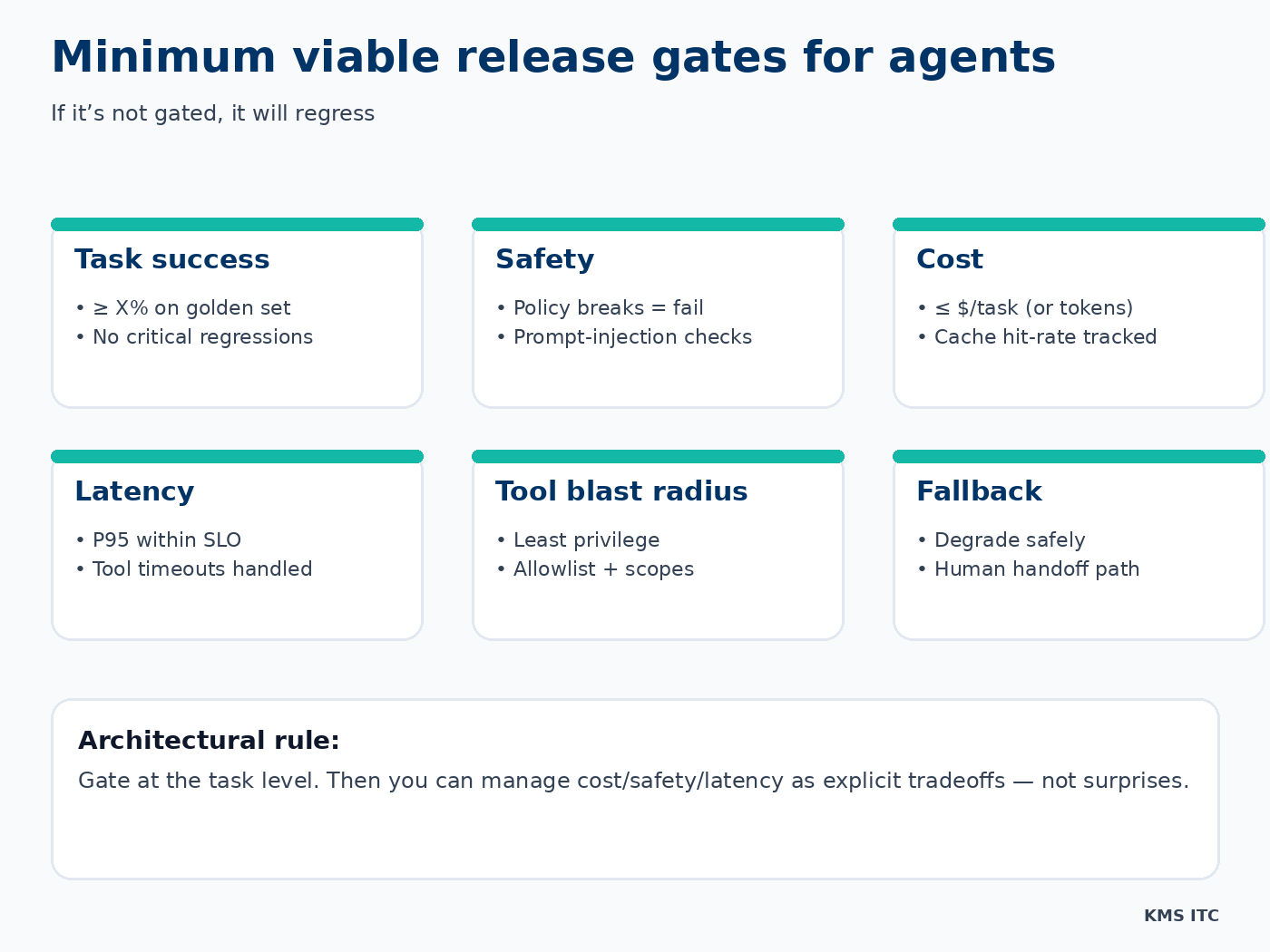

5) Minimum viable release gates (CTO-level)

If you implement only one thing, implement task-level success gating.

Everything else becomes manageable when you can say:

- “this change improves success by 4% on our golden set”

- “it costs 12% more tokens per task”

- “it increases tool-call errors in scenario X”

That’s a tradeoff discussion. Without that data, it’s faith.
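One way to turn those statements into a gate is to compare a candidate run against a baseline run on the golden set and block on regressions. The sketch below assumes three aggregate metrics and made-up thresholds; the metric names, the default limits, and reading "4%" as four percentage points are illustrative choices, not a recommended standard.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    success_rate: float      # fraction of golden-set tasks completed, 0..1
    tokens_per_task: float   # mean tokens spent per task
    tool_error_rate: float   # fraction of tool calls that failed, 0..1


def release_gate(
    baseline,
    candidate,
    max_success_drop=0.02,         # absolute drop allowed in success rate
    max_token_increase=0.15,       # relative increase allowed in tokens per task
    max_tool_error_increase=0.01,  # absolute increase allowed in tool error rate
):
    """Return the list of gate failures; an empty list means the change may ship."""
    failures = []
    if candidate.success_rate < baseline.success_rate - max_success_drop:
        failures.append("task success regressed beyond threshold")
    if candidate.tokens_per_task > baseline.tokens_per_task * (1 + max_token_increase):
        failures.append("token cost per task exceeds budget")
    if candidate.tool_error_rate > baseline.tool_error_rate + max_tool_error_increase:
        failures.append("tool-call error rate regressed beyond threshold")
    return failures


baseline = RunMetrics(success_rate=0.80, tokens_per_task=1000, tool_error_rate=0.02)
# Success up 4 points, tokens up 12%: inside the example thresholds.
candidate = RunMetrics(success_rate=0.84, tokens_per_task=1120, tool_error_rate=0.02)
failures = release_gate(baseline, candidate)
```

In CI, a non-empty `failures` list would fail the build; the thresholds themselves are the tradeoff discussion made explicit.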

6) What to do next (a 10-point checklist)

Use this as a lightweight starting point:

- Define the agent’s task contract (goals, users, constraints)

- Instrument trace capture (prompts, tool calls, errors, latency, cost)

- Create a golden set of critical tasks (10–50 is enough to start)

- Add edge-case and adversarial scenarios (incl. prompt injection)

- Pick a small set of standard metrics (success, safety, cost, latency)

- Add component metrics (tool selection/params, memory retrieval quality)

- Implement CI gates (block release on regression thresholds)

- Add budget guardrails (token/$ per task ceilings)

- Run periodic human-in-the-loop (HITL) audits (sample traces + eval outputs)

- Set up prod decay alerts + rollback playbooks
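For the last checklist item, a production decay alert can start as a rolling success rate compared against the offline baseline. The sketch below is one simple approach under assumed values (window size, allowed drop); `DecayMonitor` is a hypothetical name, and a real deployment would probably feed this from the trace store and route alerts into existing paging.

```python
from collections import deque


class DecayMonitor:
    """Flag decay when rolling task success falls well below the baseline."""

    def __init__(self, baseline_success, window=50, max_drop=0.10):
        self.baseline_success = baseline_success
        self.max_drop = max_drop
        self.outcomes = deque(maxlen=window)  # most recent task outcomes only

    def record(self, success):
        """Record one task outcome; return True if an alert should fire."""
        self.outcomes.append(bool(success))
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # not enough data for a stable rate yet
        rate = sum(self.outcomes) / len(self.outcomes)
        return rate < self.baseline_success - self.max_drop


# Small window for illustration: ten successes, then a run of failures.
monitor = DecayMonitor(baseline_success=0.9, window=10, max_drop=0.1)
alerts = [monitor.record(True) for _ in range(10)]
alerts += [monitor.record(False) for _ in range(3)]
```

With these example settings the monitor stays quiet while the window is all successes and fires once three of the last ten tasks have failed, which is the point at which a rollback playbook would be consulted.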

7) Closing: the question to ask before you scale agents

Before you scale agent adoption across teams, ask:

“Do we have an evaluation control plane, or are we hoping?”

If you want, I can help you:

- define a minimal evaluation standard (golden set + metrics + gates)

- design the trace/eval architecture (tool contracts, dashboards, CI integration)

- build a paved-road agent platform so teams ship safely and quickly

Reach out via /contact and tell me your top 2 agent use cases and what failure you’re most worried about (safety, cost, or reliability).