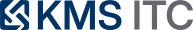

GKE Inference Gateway: the missing control point for production LLM serving

If your LLM platform is still using generic load balancing, you’re leaving latency, cost, and reliability on the table. Inference-aware routing (queue + KV cache + admission control) turns GPU serving from an app problem into a platform capability.

KMS ITC

Most enterprise LLM stacks hit the same wall when they move from demo → production:

- Latency becomes non-deterministic (time to first token, or TTFT, is fine… until it isn’t).

- p95 latency blows out under bursts (retry storms, queue collapse, noisy neighbours).

- Cost-per-token drifts upward (poor cache affinity = expensive re-computation).
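To make the re-computation point concrete, here is a back-of-the-envelope sketch. It uses a simplified model that is an assumption, not something from the source: each request either reuses a shared prompt prefix from the KV cache or recomputes the whole prompt. The prompt sizes are hypothetical.

```python
def expected_prefill_tokens(prompt_tokens: int, shared_prefix_tokens: int, hit_rate: float) -> float:
    """Expected prompt tokens a replica must prefill (recompute) per request.

    Simplified model (an assumption): on a cache hit the shared prefix is reused
    from the KV cache; on a miss the entire prompt is recomputed.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    if not 0 <= shared_prefix_tokens <= prompt_tokens:
        raise ValueError("shared prefix must fit inside the prompt")
    hit_cost = prompt_tokens - shared_prefix_tokens
    miss_cost = prompt_tokens
    return hit_rate * hit_cost + (1.0 - hit_rate) * miss_cost


# Hypothetical workload: 4,000-token prompts sharing a 3,000-token prefix
# (system prompt plus retrieved context).
for rate in (0.2, 0.4, 0.8):
    tokens = expected_prefill_tokens(4000, 3000, rate)
    print(f"hit rate {rate:.0%}: ~{tokens:,.0f} prefill tokens per request")
```

Under these assumptions, each extra point of hit rate removes prefill work in proportion to the shared prefix length. This is why cache affinity shows up directly in cost-per-token.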

This is not a “prompt quality” issue.

It’s an inference traffic-shaping issue—and generic load balancers were never designed for it.

1) Why LLM serving breaks “normal” load balancing assumptions

Traditional L7 load balancing is mostly blind to the two things that dominate LLM inference performance:

- Queueing dynamics (bursty arrivals + long-tail service times)

- KV cache locality (your context is effectively a “warm state” you want to reuse)
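Queueing dynamics explain why replicas that look "mostly idle" can still produce ugly tails. The sketch below uses the textbook M/M/1 queue (Poisson arrivals, exponential service, one server, FIFO) purely for intuition. Real model servers batch requests, so the absolute numbers do not transfer, but the shape of the curve does. In M/M/1, time in system is exponentially distributed with rate (mu - lambda), which gives p95 = ln(20) / (mu - lambda).

```python
import math
from typing import Tuple


def mm1_latency(arrival_rate: float, service_rate: float) -> Tuple[float, float]:
    """Mean and p95 time-in-system for an M/M/1 FIFO queue (textbook model, not an LLM server)."""
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    rate = service_rate - arrival_rate
    mean = 1.0 / rate
    p95 = math.log(20) / rate  # solves exp(-rate * t) = 0.05
    return mean, p95


service_rate = 10.0  # requests/second one replica can complete (assumed)
for utilisation in (0.5, 0.8, 0.9, 0.95):
    mean, p95 = mm1_latency(utilisation * service_rate, service_rate)
    print(f"utilisation {utilisation:.0%}: mean {mean:.2f}s, p95 {p95:.2f}s")
```

In this model, going from 80% to 95% utilisation quadruples p95, because the effective drain rate falls from 2 to 0.5 requests per second. That is the "fine until it isn’t" behaviour in miniature.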

So round-robin (or least-connection) will often do the wrong thing:

- It sends a request to an “idle” replica that has none of the relevant prefix cached.

- That forces expensive re-processing (prefill) of long prompts.

- Then it creates backpressure and pushes tail latency into user-visible timeouts.

In other words: you’re optimising for fairness, but LLM serving needs efficiency + protection.
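A toy simulation makes this failure mode visible. Every element is a simplifying assumption: each replica keeps a tiny LRU cache of prompt prefixes, eight chat sessions take turns, and the "affinity" router is a hypothetical deterministic hash of the prefix. It is not how GKE Inference Gateway actually scores endpoints.

```python
from collections import OrderedDict
from itertools import cycle


class Replica:
    """Toy model-server replica with a small LRU cache of prompt prefixes."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.cache: "OrderedDict[str, None]" = OrderedDict()

    def serve(self, prefix: str) -> bool:
        """Serve one request; return True if its prefix was already cached."""
        if prefix in self.cache:
            self.cache.move_to_end(prefix)  # mark as most recently used
            return True
        self.cache[prefix] = None
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used prefix
        return False


def prefix_hit_rate(requests, num_replicas: int, capacity: int, affinity: bool) -> float:
    replicas = [Replica(capacity) for _ in range(num_replicas)]
    round_robin = cycle(range(num_replicas))
    hits = 0
    for prefix in requests:
        if affinity:
            # Hypothetical affinity rule: a stable hash so the same prefix always
            # lands on the same replica (Python's built-in hash() is salted per process).
            idx = sum(map(ord, prefix)) % num_replicas
        else:
            idx = next(round_robin)
        hits += replicas[idx].serve(prefix)
    return hits / len(requests)


# Eight chat sessions, ten turns each, interleaved; three replicas caching three prefixes each.
requests = [f"chat-{i}" for _ in range(10) for i in range(8)]
print("round-robin:", prefix_hit_rate(requests, 3, 3, affinity=False))
print("affinity:   ", prefix_hit_rate(requests, 3, 3, affinity=True))
```

With these numbers, round-robin never gets a hit. Each replica cycles through all eight sessions, which is more than its cache can hold. The affinity router misses only the first turn of each session and reaches a hit rate of 0.9. A pure hash like this also shows how hot replicas emerge when one prefix becomes popular.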

2) The platform move: put inference-aware routing in front of the model servers

Google’s Vertex AI team describes adopting GKE Inference Gateway (built on the Kubernetes Gateway API) and seeing meaningful improvements in production:

- TTFT improvements (reported >35% faster responses in one workload)

- Tail latency improvements (reported ~2× better p95 in a bursty chat workload)

- Prefix cache hit rate uplift (reported doubling of the hit rate in their setup)

Those numbers are interesting.

But the architecture implication is bigger:

You can move “inference scheduling” out of application code and into a reusable platform layer.

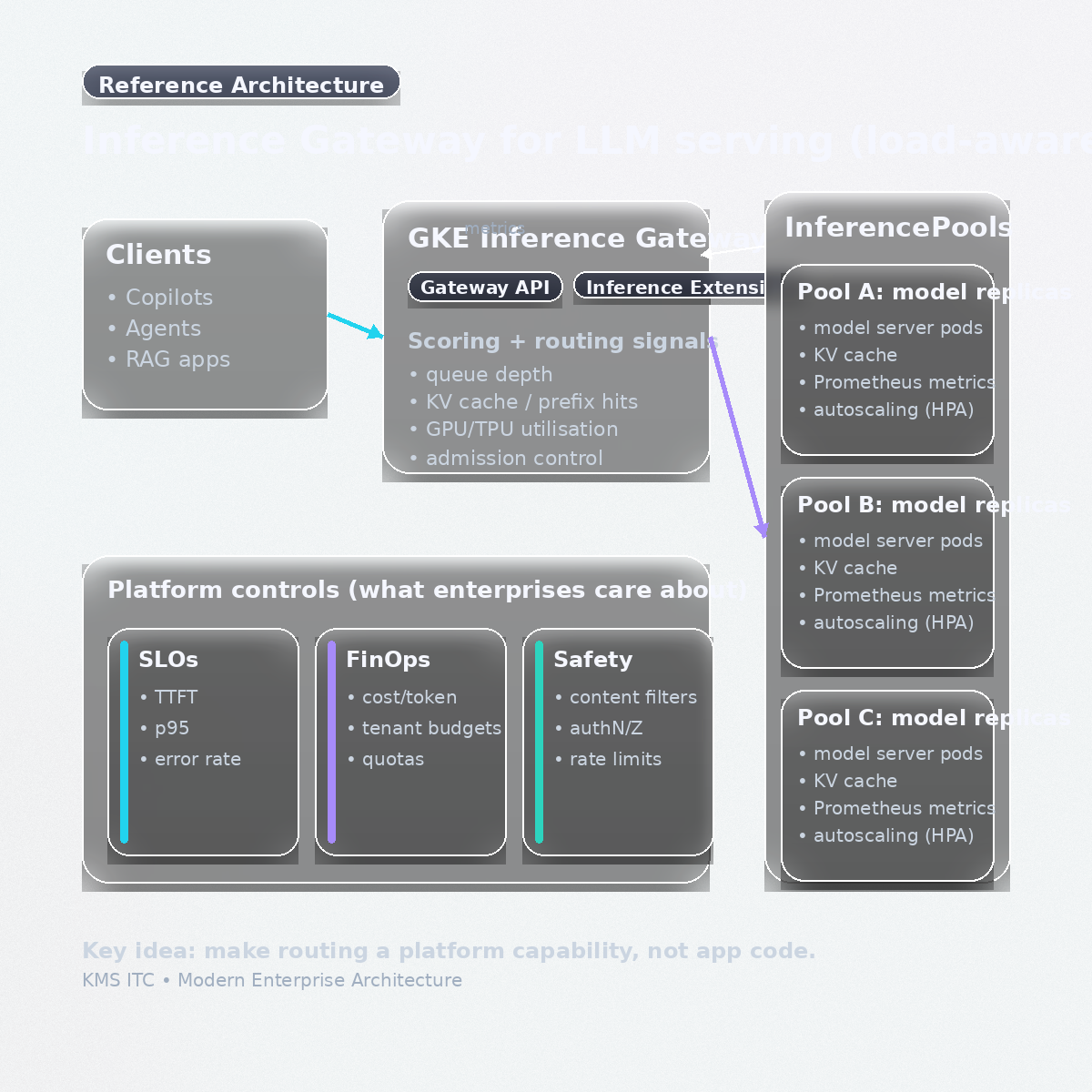

3) Reference architecture (CTO/EA view): routing is now a first-class capability

What changes when you introduce an inference gateway:

- Routing becomes policy-driven: choose replicas based on queue depth + cache affinity + utilisation (not just “available endpoints”).

- Bursts are handled upstream: admission control at ingress prevents pod-level saturation from cascading.

- Observability becomes actionable: the gateway is the right choke point to tag requests (tenant, model, workload class) and enforce SLOs/quotas.
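To illustrate the choke-point idea, the sketch below tags each request and enforces a per-tenant token budget before the request reaches a model server. The header names, tenant IDs, and token-bucket quota are hypothetical choices for this example. They are not GKE Inference Gateway configuration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class RequestTags:
    tenant: str
    model: str
    workload_class: str  # e.g. "interactive" or "batch"


def tag_request(headers: Dict[str, str]) -> RequestTags:
    # Hypothetical header names; standardise your own at the gateway.
    return RequestTags(
        tenant=headers.get("x-tenant-id", "unknown"),
        model=headers.get("x-model", "default"),
        workload_class=headers.get("x-workload-class", "interactive"),
    )


class TokenBucket:
    """Per-tenant quota: holds up to `capacity` tokens, refilled at `refill_per_s`."""

    def __init__(self, capacity: float, refill_per_s: float) -> None:
        self.capacity = capacity
        self.refill_per_s = refill_per_s
        self.tokens = capacity
        self.last = 0.0

    def try_consume(self, amount: float, now: float) -> bool:
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_per_s)
        self.last = now
        if amount <= self.tokens:
            self.tokens -= amount
            return True
        return False


def admit(tags: RequestTags, estimated_tokens: int, buckets: Dict[str, TokenBucket], now: float) -> bool:
    """Reject unknown tenants and tenants that have exhausted their budget."""
    bucket = buckets.get(tags.tenant)
    return bucket is not None and bucket.try_consume(estimated_tokens, now)


buckets = {"team-a": TokenBucket(capacity=1000, refill_per_s=100)}
tags = tag_request({"x-tenant-id": "team-a", "x-workload-class": "batch"})
print(tags, admit(tags, 800, buckets, now=0.0), admit(tags, 800, buckets, now=1.0))
```

Because the tags are computed once at ingress, the same values can drive quotas, routing, and per-tenant SLO dashboards.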

This is the same “enterprise pattern” we’ve used for years with API gateways—now applied to LLM inference.

4) The two enterprise tradeoffs you need to decide (explicitly)

Tradeoff A — Cache affinity vs fairness

Content-aware routing improves efficiency, but it can create “hot replicas”.

What to standardise:

- A multi-objective score (cache hit potential and queue depth)

- A “hot node escape hatch” (prioritise queue depth when saturation begins)
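One way to encode "multi-objective score plus escape hatch" is sketched below. The weights, the queue-depth normalisation, and the saturation threshold are hypothetical defaults for illustration. `prefix_match` stands in for whatever cache-affinity signal your gateway can actually observe.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ReplicaState:
    name: str
    queue_depth: int     # requests waiting on this replica
    prefix_match: float  # estimated fraction of the prompt already in its KV cache (0..1)


def score(r: ReplicaState, max_queue: int, w_cache: float, w_queue: float) -> float:
    queue_headroom = 1.0 - r.queue_depth / max_queue  # 1.0 means an empty queue
    return w_cache * r.prefix_match + w_queue * queue_headroom


def pick_replica(
    replicas: List[ReplicaState],
    max_queue: int = 8,
    saturation_queue: int = 6,
    w_cache: float = 0.6,
    w_queue: float = 0.4,
) -> Optional[ReplicaState]:
    eligible = [r for r in replicas if r.queue_depth < max_queue]
    if not eligible:
        return None  # nothing has headroom: hand off to admission control
    best = max(eligible, key=lambda r: score(r, max_queue, w_cache, w_queue))
    if best.queue_depth >= saturation_queue:
        # Hot-node escape hatch: the cache-favoured replica is saturating,
        # so ignore affinity and take the shortest queue instead.
        return min(eligible, key=lambda r: r.queue_depth)
    return best


warm = ReplicaState("warm", queue_depth=2, prefix_match=0.9)
hot = ReplicaState("hot", queue_depth=7, prefix_match=1.0)
cold = ReplicaState("cold", queue_depth=0, prefix_match=0.0)
print(pick_replica([warm, cold]).name)  # warm: good cache match, short queue
print(pick_replica([hot, cold]).name)   # cold: escape hatch overrides affinity
```

Without the escape hatch, the second call would keep routing to `hot` because its cache score dominates. That is the hot-replica failure mode in action.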

If you don’t standardise this, teams will reinvent it (badly) per application.

Tradeoff B — Protecting p95 vs maximising throughput

For bursty chat traffic, you usually want predictable p95 even if you sacrifice some max throughput.

What to standardise:

- Admission control (queue caps + backpressure + retry-after)

- “Degradation tiers” (smaller model, shorter outputs, cached answers)

- Separate SLO classes (interactive vs batch)
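These three standards can live in one small decision function at the gateway. The sketch below is a hypothetical policy. The per-class queue limits, the smaller model name, the output caps, and the retry-after values are placeholders you would tune from your own measurements. Only the "smaller model, shorter outputs" degradation tier is shown. A "reject" would typically reach the client as HTTP 429 with a Retry-After header.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# Hypothetical per-class limits: (soft queue limit, hard queue cap).
LIMITS: Dict[str, Tuple[int, int]] = {
    "interactive": (16, 32),
    "batch": (64, 256),
}


@dataclass
class Decision:
    action: str                            # "admit", "degrade" or "reject"
    model: Optional[str] = None
    max_output_tokens: Optional[int] = None
    retry_after_s: Optional[int] = None


def admission_decision(workload_class: str, queue_depth: int) -> Decision:
    soft, hard = LIMITS[workload_class]
    if queue_depth >= hard:
        # Backpressure: shed load and tell the client when to come back,
        # instead of letting retries pile onto a saturated queue.
        return Decision("reject", retry_after_s=2 if workload_class == "interactive" else 30)
    if workload_class == "interactive" and queue_depth >= soft:
        # Degradation tier: protect p95 with a smaller model and shorter output.
        return Decision("degrade", model="small-model", max_output_tokens=256)
    # Batch traffic past its soft limit still queues: it trades latency for throughput.
    return Decision("admit", model="default-model", max_output_tokens=1024)


for cls, depth in [("interactive", 4), ("interactive", 20), ("interactive", 40), ("batch", 100)]:
    print(cls, depth, admission_decision(cls, depth))
```

Keying the limits by workload class is what makes interactive and batch separate SLO classes. Interactive traffic degrades early to protect p95, while batch traffic absorbs queueing delay until its hard cap.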

5) What to do next: a practical rollout sequence (enterprise-safe)

If you’re a platform lead, here’s the order that tends to work:

- Instrument current inference (TTFT/p95, queue depth, tokens in/out, cache hit rate if available)

- Classify workloads (interactive chat, agentic long-context, batch summarisation)

- Introduce the gateway as the control point (routing + admission control)

- Make routing policy owned by platform (weights, objectives, defaults)

- Add governance hooks (tenant quotas, budget envelopes, model allowlists)

- Run “burst drills” (synthetic spikes + measure p95 + verify backpressure)
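A burst drill can start as a simulation before you touch real traffic. The sketch below uses a deliberately simple discrete-time model: one FIFO queue, fixed service capacity per tick, and a synthetic spike. All rates and the queue cap are illustrative assumptions. It compares p95 queueing latency with and without a queue cap and checks that backpressure actually sheds load.

```python
from collections import deque
from typing import List, Optional, Tuple


def p95(values: List[int]) -> int:
    """Nearest-rank 95th percentile (integer ceiling avoids float rounding)."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def burst_drill(arrivals: List[int], service_per_tick: int,
                queue_cap: Optional[int] = None) -> Tuple[List[int], int]:
    """Simulate a FIFO queue; return (latency in ticks per served request, rejected count)."""
    queue: deque = deque()  # holds the arrival tick of each waiting request
    latencies: List[int] = []
    rejected = 0
    tick = 0
    while tick < len(arrivals) or queue:  # keep draining after the trace ends
        for _ in range(arrivals[tick] if tick < len(arrivals) else 0):
            if queue_cap is not None and len(queue) >= queue_cap:
                rejected += 1  # shed (e.g. with retry-after) instead of queueing
            else:
                queue.append(tick)
        for _ in range(min(service_per_tick, len(queue))):
            latencies.append(tick - queue.popleft() + 1)  # served in its arrival tick = 1
        tick += 1
    return latencies, rejected


# Synthetic trace: steady 4 req/tick, a 5-tick spike at 20 req/tick, then steady again.
trace = [4] * 20 + [20] * 5 + [4] * 20
for cap in (None, 10):
    lat, shed = burst_drill(trace, service_per_tick=5, queue_cap=cap)
    print(f"queue_cap={cap}: p95={p95(lat)} ticks, served={len(lat)}, rejected={shed}")
```

The drill checks two properties: served plus rejected equals total arrivals (nothing silently lost), and the capped run keeps p95 bounded at the cost of explicit rejections.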

The takeaway

Production LLM serving is less like “web traffic” and more like stateful, cache-sensitive scheduling on scarce accelerators.

Inference-aware gateways are the pattern shift:

- SLOs: defend TTFT and p95 under burst

- FinOps: improve cache efficiency → lower cost-per-token

- Operations: stop building bespoke schedulers per team

Sources

- Google Cloud Blog: GKE Inference Gateway improved latency for Vertex AI

- Google Cloud Docs: About GKE Inference Gateway

If you’re building an internal LLM platform, I can share a starter routing policy (workload classes, scoring weights, admission control defaults, and rollout guardrails).

Comment “INFERENCE GATEWAY” and tell me whether you’re optimising for chat, RAG, or agents—and what your current p95 looks like under burst.