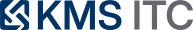

Provisioned Throughput for LLMs: the missing layer between SLOs and FinOps

On-demand LLM inference is great for experiments—and brutal for production SLOs. Provisioned throughput turns capacity into an architectural primitive: predictable latency, deliberate burst handling, and a budget you can actually govern.

KMS ITC

If you’re building internal copilots or agentic workflows, you’ve already felt the tension:

- Product wants fast responses and no waiting.

- Finance wants predictable spend.

- Platform wants reliability (and fewer 2am incidents).

On-demand LLM inference is fantastic for prototypes—but in production it behaves like an “infinite variable cost service” with latency variance and capacity uncertainty.

Provisioned throughput changes the game: it makes inference capacity something you engineer—not something you hope scales.

1) The architectural problem: SLOs don’t survive “best effort capacity”

When demand spikes (launches, payroll week, quarter-end, incident floods), a typical LLM stack fails in predictable ways:

- Queue growth → timeouts → retries → amplified load.

- p95 latency balloons, even if average looks “fine”.

- Teams add ad-hoc workarounds: caching here, bigger limits there, “just increase the budget”.

The root cause isn’t your prompt.

It’s that you’re running an SLO-bound workload on opportunistic capacity.

2) Provisioned throughput: capacity as a first-class platform primitive

Provisioned throughput is essentially a commitment model for inference:

- You reserve a baseline of capacity for steady traffic.

- You design explicit handling for burst traffic (overflow → on-demand, degrade paths, or queue caps).

In enterprise terms, you’re moving inference from:

- “shared public lane”

to

- “reserved lane + controlled overflow”

That unlocks real platform behaviors:

- Latency targets you can defend (because capacity is not accidental).

- FinOps you can govern (because there is a defined base load).

- Change management (because scaling is an intentional decision, not a panic reaction).

3) The key design move: separate base load from burst (and treat them differently)

Most enterprise GenAI traffic is a mix of:

- Base load: steady internal usage (helpdesk copilot, dev assistant, search augmentation).

- Burst: events (all-hands Q&A, outage triage, marketing launches, regulatory deadlines).

If you treat them the same, you’ll overpay for “always-on peak”, or you’ll underserve users when it matters.

A better pattern:

- Put the base load on provisioned capacity.

- Route burst into a controlled overflow path.

- Apply guardrails that preserve system health and budget.

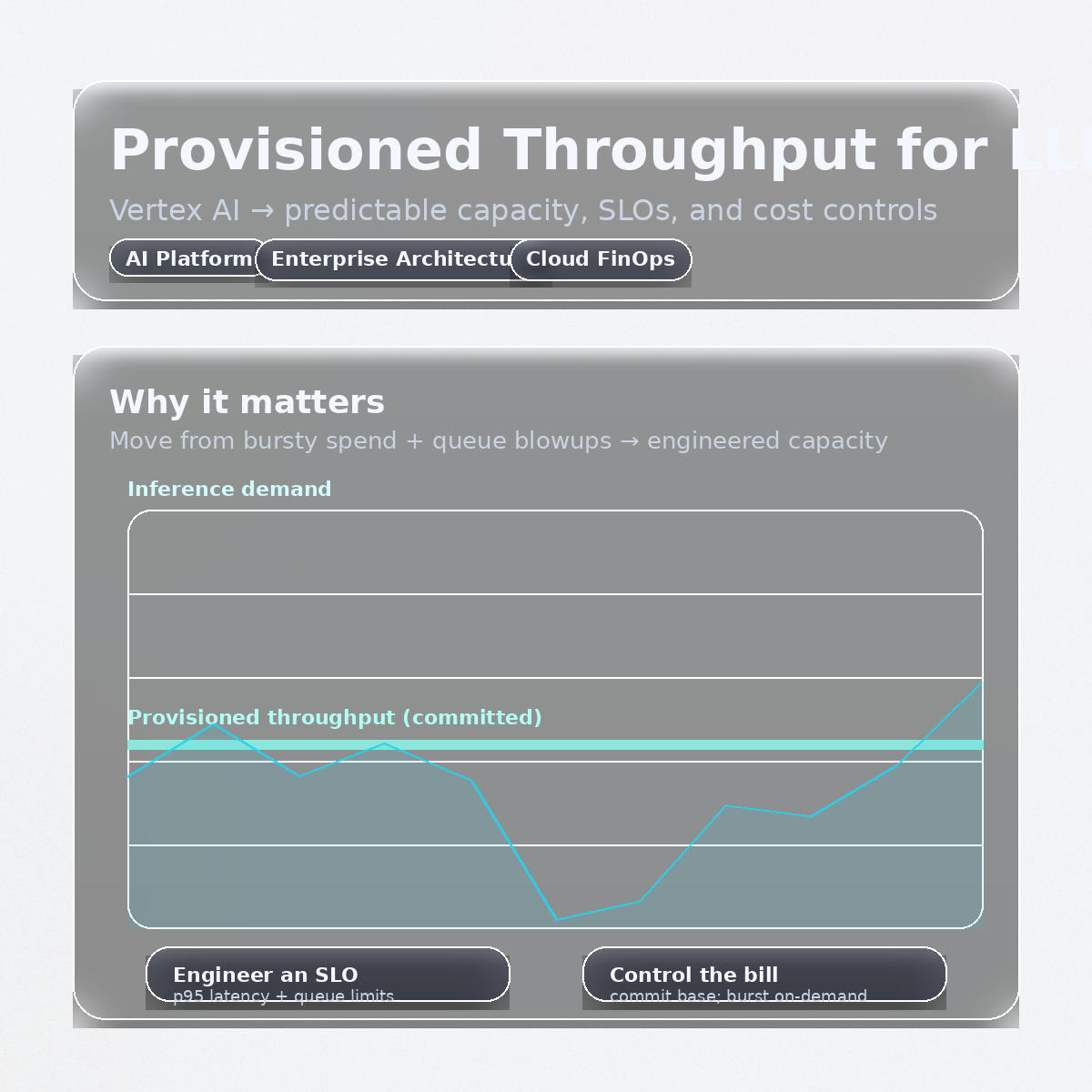

4) Reference architecture: LLM capacity routing (the CTO-friendly version)

The architecture shift isn’t “turn on a feature”. It’s introducing a platform layer that enforces policy and SLOs.

What’s new here is the gateway. It becomes the control plane for:

- Routing: provisioned-first, then overflow.

- Guardrails: queue caps, timeouts, circuit breakers.

- Governance: tenant-level policy, budget enforcement, and prompt/tool versioning.

- Observability: trace IDs + request tagging so you can answer “what did this cost and why?”

5) The “three knobs” you must standardise (or you’ll just shift the pain)

Knob A — Admission control (protect reliability)

Define what happens when you’re saturated:

- Hard queue limits per tenant/workload

- Explicit timeouts by use case (chat vs batch)

- Backpressure (429 + retry-after) instead of silent queuing

Knob B — Degradation paths (protect user experience)

Design fallbacks deliberately:

- Smaller/cheaper model for overflow

- Shorter max output tokens

- Turn off tools/function-calls for overflow tier

- Serve cached answers for common intents

Knob C — Cost envelopes (protect the business)

Make cost a platform constraint, not a monthly surprise:

- Budget ceilings by tenant and environment

- Default quotas for new apps (sandbox → production promotion)

- “Spend per successful outcome” tracking (tickets resolved, code merged, time saved)

6) What to do next: an enterprise rollout checklist

If you’re a CTO / EA / platform lead, here’s the pragmatic sequence:

- Classify workloads into tiers (internal, customer-facing, regulated) with explicit SLO + budget targets.

- Instrument the gateway (request IDs, tenant tags, tokens-in/out, model, tool use, latency).

- Estimate base load from real traffic (not forecasts): steady QPS + typical token volume.

- Provision capacity for base load; route overflow to on-demand.

- Define saturation behavior (queue caps + timeouts + 429 strategy) and test it.

- Add safe degradation (smaller model, capped output, cached responses).

- Turn on governance (budgets, quotas, and a promotion process from sandbox → production).

The takeaway

Provisioned throughput isn’t just a pricing detail—it’s an architecture lever.

It lets you align three things that usually fight each other:

- SLOs (predictable latency)

- Platform engineering (operational control)

- FinOps (predictable spend)

Sources

If you want, I can share a one-page capacity routing policy template (tiers, overflow rules, degradation paths, and budget envelopes) you can drop into your architecture standards.

Comment “CAPACITY POLICY” and tell me your cloud (AWS/Azure/GCP) + whether your primary workloads are chat, RAG, or agents.