GenAI architecture needs a review loop: operationalising the Well-Architected GenAI Lens

The fastest way to scale GenAI safely is to turn governance into a repeatable architecture review loop—with reference patterns, measurable guardrails, and a funded backlog.

KMS ITC

Most enterprise GenAI programs don’t fail because the model is “bad”. They fail because decisions are implicit:

- what data is allowed (and why)

- what “good” output looks like (and how it’s measured)

- who owns cost and reliability

- how agents are kept inside guardrails
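A low-tech way to make these decisions explicit is to record them in a form that a review can check for gaps. Below is a minimal Python sketch. It assumes a hypothetical `UseCaseDecisions` record whose field names are illustrative; the Well-Architected Lens does not define them.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class UseCaseDecisions:
    """Hypothetical record of the decisions a GenAI use case must make explicit."""
    allowed_data: Optional[str] = None       # what data is allowed, and why
    quality_metric: Optional[str] = None     # what "good" output looks like, and how it is measured
    cost_owner: Optional[str] = None         # who owns cost
    reliability_owner: Optional[str] = None  # who owns reliability (and gets paged)
    agent_guardrails: Optional[str] = None   # how agents are kept inside guardrails


def missing_decisions(record: UseCaseDecisions) -> list[str]:
    """Return the names of decisions that are still implicit (unset or blank)."""
    return [
        f.name
        for f in fields(record)
        if not (getattr(record, f.name) or "").strip()
    ]


draft = UseCaseDecisions(
    allowed_data="Public product docs only; no customer PII",
    quality_metric="Task success >= 85% on the offline eval set",
    cost_owner="Support platform team",
)
print(missing_decisions(draft))  # ['reliability_owner', 'agent_guardrails']
```

An empty result becomes an entry condition for the review, not a guarantee that the answers are good.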

The updated AWS Well-Architected Generative AI Lens is useful because it pushes those decisions into a reviewable architecture—not a slide deck.

1) What changed (and why it matters)

AWS’ update calls out three areas that are easy to underestimate until you get burned:

- Responsible AI as “required reading” (not an afterthought)

- Data architecture preamble (GenAI is only as good as your data plumbing)

- Agentic workflows preamble (tool use + orchestration changes your risk profile)

This isn’t just documentation hygiene. It’s a signal that GenAI has crossed into “normal architecture” territory: repeatable patterns, explicit tradeoffs, and operational posture.

2) The enterprise architecture implication: treat GenAI as a platform capability

If every team “just builds a chatbot”, you don’t get innovation—you get:

- duplicated retrieval pipelines

- inconsistent safety controls

- cost explosions (token usage, vector storage, and data egress charges)

- unowned reliability (who gets paged when the model/API degrades?)

The better mental model is:

- Product teams build use cases.

- A GenAI platform provides paved roads: identity, data access patterns, evaluation harnesses, policy enforcement, observability, and cost controls.

- Enterprise architecture, security, and legal teams shape the control catalogue (what must be true for a use case to ship).

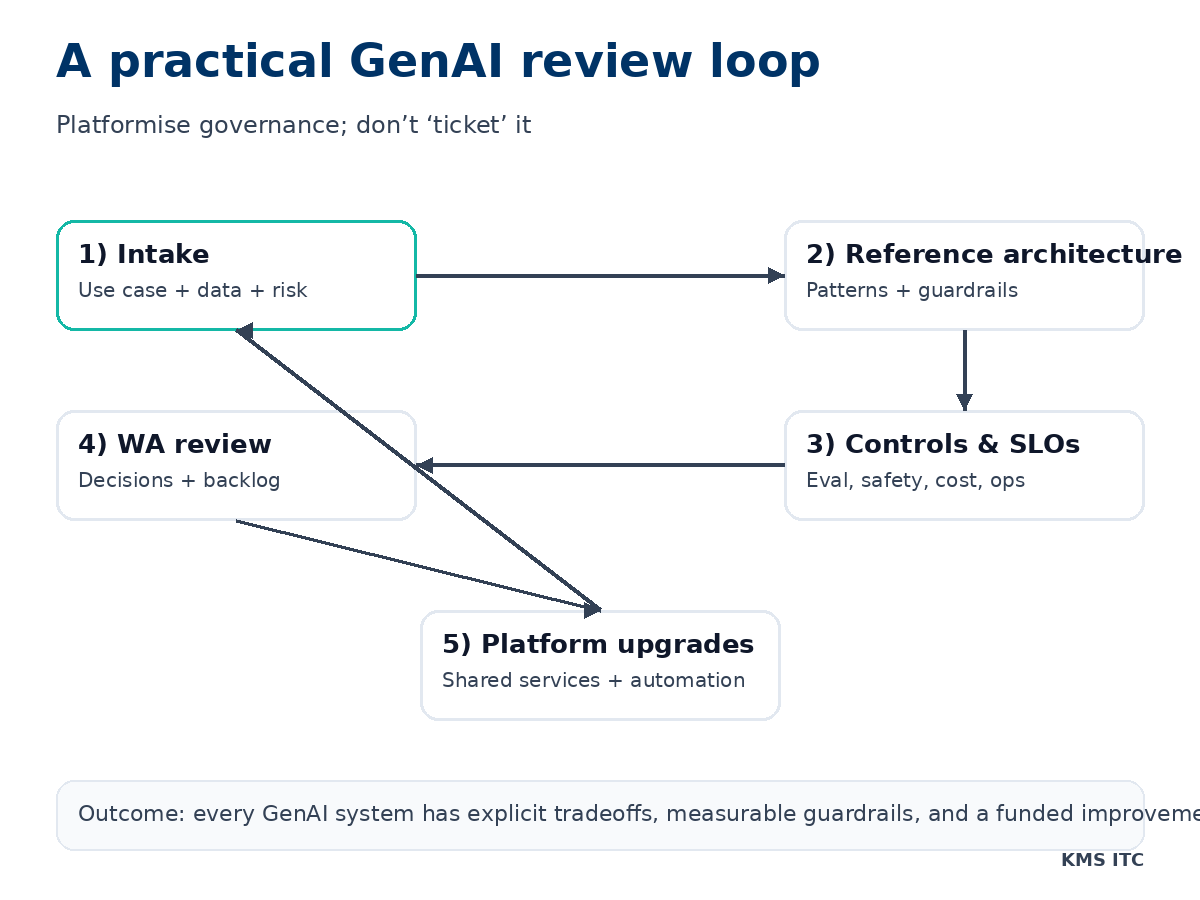
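To make the paved road concrete, here is a minimal sketch of a shared gateway that applies two platform controls to every call: a data-classification allowlist per use case and a per-team token budget. `PlatformGateway`, `TeamBudget`, the use-case names, and the limits are all hypothetical. A real gateway would also handle identity, logging, and model routing.

```python
from dataclasses import dataclass, field

# Hypothetical control-catalogue entry: data classifications each use case may retrieve.
ALLOWED_CLASSIFICATIONS: dict[str, set[str]] = {
    "support-assistant": {"public", "internal"},
    "hr-policy-bot": {"public", "internal", "confidential"},
}


@dataclass
class TeamBudget:
    monthly_token_limit: int
    tokens_used: int = 0


@dataclass
class PlatformGateway:
    """Hypothetical paved-road gateway: every team's model calls pass the same checks."""
    budgets: dict[str, TeamBudget] = field(default_factory=dict)

    def authorize(self, team: str, use_case: str, data_classes: set[str],
                  est_tokens: int) -> tuple[bool, str]:
        # Unknown use cases get an empty allowlist, so nothing is retrievable.
        forbidden = data_classes - ALLOWED_CLASSIFICATIONS.get(use_case, set())
        if forbidden:
            return False, f"data classification not approved: {sorted(forbidden)}"
        budget = self.budgets.get(team)
        if budget is None:
            return False, "no cost owner: team has no budget"
        if budget.tokens_used + est_tokens > budget.monthly_token_limit:
            return False, "token budget exceeded"
        budget.tokens_used += est_tokens  # charge the estimate to the owning team
        return True, "ok"


gateway = PlatformGateway(budgets={"support": TeamBudget(monthly_token_limit=1_000_000)})
print(gateway.authorize("support", "support-assistant", {"internal"}, 2_000))    # (True, 'ok')
print(gateway.authorize("support", "support-assistant", {"restricted"}, 2_000))  # denied: not on the allowlist
```

The design choice that matters is where the checks live. Because they sit in one shared service, a new team inherits the controls and does not have to re-implement them.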

3) A practical GenAI review loop (what to implement)

A good GenAI review loop produces artefacts you can reuse:

- a reference architecture (RAG, tool use, agent orchestration, multi-tenant)

- a control catalogue (data classification, retention, audit, red-teaming, model risk)

- an evaluation standard (task success, refusal quality, hallucination rate, latency, cost)

- an operational SLO set (availability targets, fallback modes, incident playbooks)
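Fallback modes are easiest to review when they form an explicit, ordered chain. This sketch assumes a hypothetical `call_model` client that raises `ModelUnavailable` or `TimeoutError` when the endpoint degrades. An in-memory dict stands in for a real response cache.

```python
from typing import Callable


class ModelUnavailable(Exception):
    """Raised by the (hypothetical) primary model client when the endpoint degrades."""


def answer_with_fallback(
    question: str,
    call_model: Callable[[str], str],
    cache: dict[str, str],
) -> tuple[str, str]:
    """Return (answer, mode), degrading from the model to a cached answer to human handoff."""
    try:
        return call_model(question), "model"
    except (ModelUnavailable, TimeoutError):
        pass  # fall through to the degraded modes below
    cached = cache.get(question)
    if cached is not None:
        return cached, "cached"
    return "I've passed your question to a support agent.", "human_handoff"


def flaky_model(question: str) -> str:
    raise ModelUnavailable("endpoint degraded")


cache = {"How do I reset my password?": "Use the 'Forgot password' link on the sign-in page."}
print(answer_with_fallback("How do I reset my password?", flaky_model, cache)[1])  # cached
print(answer_with_fallback("Can I get a refund?", flaky_model, cache)[1])          # human_handoff
```

Because the mode is returned alongside the answer, the fallback rate can be monitored as an SLO signal. Otherwise it tends to be discovered only during an incident.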

The big shift: governance becomes automation + shared services, not “more approvals”.
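Governance as automation can look like this: the evaluation standard runs as a release gate in CI rather than as a manual sign-off. The `EvalCase` fields, metric definitions, and thresholds below are hypothetical. "Correct refusal rate" is only one simple proxy for refusal quality, and p95 latency uses the nearest-rank method.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    task_success: bool
    should_refuse: bool  # the system ought to decline this case
    refused: bool
    hallucinated: bool
    latency_ms: float
    cost_usd: float


def p95(values: list[float]) -> float:
    """95th percentile via nearest rank; integer ceiling avoids float rounding."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n), 1-based
    return ordered[rank - 1]


def evaluate(cases: list[EvalCase]) -> dict[str, float]:
    n = len(cases)
    must_refuse = [c for c in cases if c.should_refuse]
    return {
        "task_success_rate": sum(c.task_success for c in cases) / n,
        "correct_refusal_rate": (sum(c.refused for c in must_refuse) / len(must_refuse)
                                 if must_refuse else 1.0),
        "hallucination_rate": sum(c.hallucinated for c in cases) / n,
        "p95_latency_ms": p95([c.latency_ms for c in cases]),
        "cost_per_case_usd": sum(c.cost_usd for c in cases) / n,
    }


# Hypothetical thresholds: "min" metrics must meet the limit, "max" metrics must not exceed it.
THRESHOLDS = {
    "task_success_rate": ("min", 0.85),
    "correct_refusal_rate": ("min", 0.95),
    "hallucination_rate": ("max", 0.02),
    "p95_latency_ms": ("max", 3000.0),
    "cost_per_case_usd": ("max", 0.01),
}


def gate(metrics: dict[str, float]) -> list[str]:
    """Return the failing metrics; an empty list means the release may proceed."""
    failures = []
    for name, (kind, limit) in THRESHOLDS.items():
        value = metrics[name]
        if (kind == "min" and value < limit) or (kind == "max" and value > limit):
            failures.append(f"{name}={value:g} (limit {kind} {limit:g})")
    return failures


cases = [EvalCase(True, False, False, False, 800.0, 0.004) for _ in range(19)]
cases.append(EvalCase(False, False, False, True, 4200.0, 0.009))
failures = gate(evaluate(cases))
print(failures)  # only hallucination_rate fails in this toy set
```

In a pipeline, a non-empty `failures` list would fail the build. A prompt, index, or model change that regresses quality therefore cannot ship silently.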

4) The tradeoffs to force into the open (CTO-level)

When you run a Well-Architected-style review, insist on explicit answers to:

- Build vs buy: Are you standardising on a managed model endpoint, a model gateway, or self-hosting? What’s the exit plan?

- Data boundary: What data can be retrieved? What data is forbidden? What’s the de-identification story?

- Eval maturity: Are you measuring quality continuously, or only demoing “happy paths”?

- Agent safety: What tools can the agent call? With what permissions? What is the blast radius of a prompt injection?

- Cost ownership: Who pays for tokens, embeddings, vector storage, and re-indexing—and what’s the budget guardrail?
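On the agent-safety question, a deny-by-default tool allowlist puts a hard bound on the blast radius. Suppose a prompt injection convinces the model to request a tool: the request still succeeds only if that tool and scope were granted up front. A minimal sketch with hypothetical tool names and scopes:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolGrant:
    tool: str
    scopes: frozenset[str]           # what the tool may touch
    requires_approval: bool = False  # human in the loop for high-impact actions


# Hypothetical allowlist for one agent; any tool not listed here is denied.
AGENT_POLICY = {
    "search_kb": ToolGrant("search_kb", frozenset({"kb:read"})),
    "create_ticket": ToolGrant("create_ticket", frozenset({"tickets:write"}), requires_approval=True),
}


def authorize_tool_call(tool: str, scope: str, approved: bool = False) -> bool:
    """Deny by default: only allowlisted tools, within granted scopes, pass."""
    grant = AGENT_POLICY.get(tool)
    if grant is None or scope not in grant.scopes:
        return False
    if grant.requires_approval and not approved:
        return False
    return True


print(authorize_tool_call("search_kb", "kb:read"))                          # True
print(authorize_tool_call("delete_user", "users:admin"))                    # False (not allowlisted)
print(authorize_tool_call("create_ticket", "tickets:write"))                # False (needs approval)
print(authorize_tool_call("create_ticket", "tickets:write", approved=True)) # True
```

With this policy, the blast radius of a successful injection is roughly the union of the granted scopes. A reviewer can read that union and sign off on it.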

5) A 10-point “ship it” checklist

Use this as a minimum bar for production GenAI:

- A defined use case contract (users, tasks, success metrics)

- Data sources are classified, approved, and access-controlled

- A documented prompt/tool policy (what the system is allowed to do)

- Offline evaluation set + regression tests

- Online monitoring: latency, cost, refusal rate, safety signals

- Clear fallback modes (degraded answers, human handoff, cached responses)

- Secrets + tool credentials handled with least privilege

- Logging and audit trail aligned to compliance needs

- Change management for prompts, tools, indexes, and policies

- A funded backlog of platform improvements from the last review
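The checklist can also be enforced mechanically as a release gate. A minimal sketch, assuming each review records the ten items under hypothetical boolean keys (a missing key counts as a blocker):

```python
# Hypothetical keys, one per checklist item above, in the same order.
SHIP_IT_CHECKLIST = (
    "use_case_contract",
    "data_sources_classified",
    "prompt_tool_policy",
    "offline_eval_and_regression_tests",
    "online_monitoring",
    "fallback_modes",
    "least_privilege_credentials",
    "audit_logging",
    "change_management",
    "funded_backlog",
)


def ship_it_blockers(review: dict[str, bool]) -> list[str]:
    """Return checklist items that are missing or not yet satisfied."""
    return [item for item in SHIP_IT_CHECKLIST if not review.get(item, False)]


review = {item: True for item in SHIP_IT_CHECKLIST}
review["fallback_modes"] = False
print(ship_it_blockers(review))  # ['fallback_modes']
```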

6) What to do next

If you’re scaling GenAI across multiple teams this quarter, don’t start by arguing about models. Start by implementing the review loop and shipping a paved-road platform.

If you want, I can help you:

- design a GenAI reference architecture for your org (RAG + agents)

- define a control catalogue and evaluation standard

- set up a repeatable Well-Architected-style review cadence

Reach out via /contact and tell me your top two GenAI use cases and constraints.