World models and the next generation of AI: from chat to simulation, planning, and control

The next capability jump won’t come from bigger prompts. It’ll come from agents that can simulate futures, plan under uncertainty, and verify outcomes—using world models as an internal control plane.

KMS ITC

LLMs changed software by making language executable.

The next generation of AI changes software again by making the future executable.

That sounds dramatic, but it’s an accurate way to think about world models: internal simulators that let an agent ask, “If I do X, what happens next?”—and answer it cheaply, repeatedly, and with measurable uncertainty.

When you combine world models with tool-using agents, you get systems that don’t just respond. They predict, plan, and verify before acting.

1) What is a world model (engineering definition)

A world model is a learned representation of an environment that supports:

- State construction: compressing messy observations (text, images, logs, sensor feeds, UI) into a stable internal state.

- Transition prediction: forecasting how that state changes—often conditional on actions.

- Counterfactual reasoning: comparing “what if we do A?” vs “what if we do B?” before committing.
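The three capabilities can be sketched as three methods on one object. This is a minimal, hand-coded illustration, assuming a hypothetical inventory domain with constant demand. The names `ToyWorldModel`, `encode`, `predict` and `compare` are invented for this example. A real world model would learn these functions from data rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    stock: int    # units on hand
    backlog: int  # orders not yet fulfilled


class ToyWorldModel:
    """Hypothetical, hand-coded world model for a tiny inventory domain.
    A real world model would learn encode() and predict() from data."""

    DEMAND_PER_STEP = 5  # assumed constant demand, for illustration only

    def encode(self, observation: dict) -> State:
        # State construction: keep only the fields that matter, drop the noise.
        return State(stock=int(observation.get("stock", 0)),
                     backlog=int(observation.get("open_orders", 0)))

    def predict(self, state: State, reorder: int) -> State:
        # Transition prediction: next state conditional on the action (units reordered).
        available = state.stock + reorder
        shipped = min(available, self.DEMAND_PER_STEP + state.backlog)
        return State(stock=available - shipped,
                     backlog=state.backlog + self.DEMAND_PER_STEP - shipped)

    def compare(self, state: State, action_a: int, action_b: int) -> dict:
        # Counterfactual reasoning: imagine both actions from the same state before committing.
        return {action_a: self.predict(state, action_a),
                action_b: self.predict(state, action_b)}


wm = ToyWorldModel()
s0 = wm.encode({"stock": "3", "open_orders": "2", "free_text": "ignored"})
print(wm.compare(s0, 0, 10))
# → {0: State(stock=0, backlog=4), 10: State(stock=6, backlog=0)}
```

The counterfactual costs nothing to run. Reordering nothing leaves a backlog of 4, and reordering 10 clears it. The agent learns this without touching real inventory.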

World models are often described as having two primary functions: understanding the present and predicting the future. Ding et al.'s survey, for example, organises the field around these two functions.

This matters because “agent failures” in the real world are usually failures of state and dynamics, not failures of language:

- the agent misses a constraint

- the environment changes (UIs, APIs, policies)

- small mistakes compound across a long plan

- the system can’t tell when it’s uncertain

2) Why world models are the next capability jump

Today’s LLM agents are impressive but fragile because they tend to operate like this:

Plan in text → execute tools → hope it works.

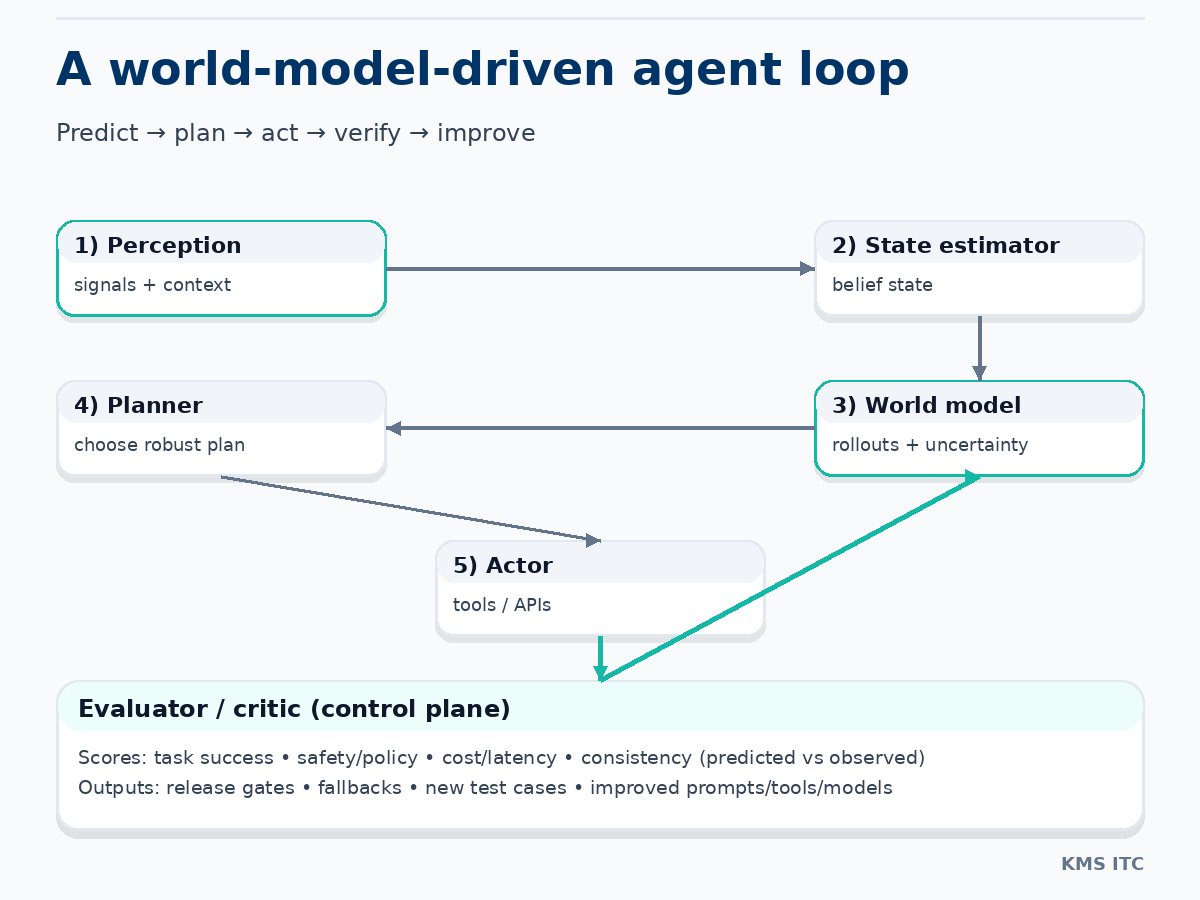

A world-model-driven agent operates more like this:

Build state → simulate futures → select a plan → execute → compare outcome vs prediction → learn/gate.
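The loop fits in a short Python function. This sketch assumes a hypothetical one-dimensional environment where actions are 20% weaker than the agent's model believes. It implements only the "gate" branch: the agent halts when its prediction error exceeds a tolerance. A "learn" branch would use the logged errors to update the model.

```python
def true_step(x: float, a: float) -> float:
    # The real environment: actions are 20% weaker than the agent believes.
    return x + 0.8 * a


def model_step(x: float, a: float) -> float:
    # The agent's world model: assumes actions apply at full strength.
    return x + a


def run_agent(x0: float, goal: float, candidates: list, tolerance: float, max_steps: int = 10):
    x, log = x0, []                                    # build state
    for _ in range(max_steps):
        # Simulate one step for every candidate action.
        predicted = {a: model_step(x, a) for a in candidates}
        # Select the action whose predicted outcome lands closest to the goal.
        action = min(predicted, key=lambda a: abs(predicted[a] - goal))
        observed = true_step(x, action)                # execute
        surprise = abs(observed - predicted[action])   # compare outcome vs prediction
        log.append((action, predicted[action], observed, surprise))
        if surprise > tolerance:                       # gate
            return observed, log, "halted: prediction error above tolerance"
        x = observed
        if abs(x - goal) < 0.5:
            return x, log, "goal reached"
    return x, log, "step budget exhausted"


x, log, status = run_agent(0.0, 10.0, [-1.0, 0.0, 1.0, 5.0], tolerance=0.5)
print(status, x)  # halts after one step: predicted 5.0, observed 4.0
```

With a looser tolerance of 1.5, the same agent reaches the goal in four steps. The gate threshold therefore sets how much mismatch between model and reality the operator will accept.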

That shift gives you four practical advantages:

- Imagination instead of trial-and-error: try 10 candidate plans in simulation before you spend money, break prod, or annoy a customer.

- Planning with uncertainty: if the model is unsure, the agent can pick conservative actions, ask for clarification, or switch strategies.

- Long-horizon reliability: rollouts expose where “step 7” fails, even if steps 1–6 look fine.

- Evaluation becomes a control plane: the system can measure predicted vs observed outcomes and enforce release gates.
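The first two advantages can be combined in one sketch. Each candidate plan is rolled out in imagination, and disagreement between ensemble members serves as the uncertainty signal. The ensemble here is an assumption: three hypothetical models that differ only in how strong they think an action is. The scoring rule (mean error plus `k` times spread) is one simple pessimistic choice, not a standard. When every plan is too uncertain, the function returns `None`, which the caller can treat as "ask for clarification".

```python
import statistics
from typing import Callable, Optional, Sequence

Model = Callable[[float, float], float]  # (state, action) -> next state


def rollout(model: Model, x0: float, plan: Sequence[float]) -> float:
    # Imagine executing the whole plan inside one model; return the final state.
    x = x0
    for a in plan:
        x = model(x, a)
    return x


def choose_plan(models: Sequence[Model], x0: float, plans: Sequence[Sequence[float]],
                goal: float, k: float = 1.0, max_spread: float = 2.0) -> Optional[int]:
    """Return the index of the best plan, or None if every plan is too uncertain."""
    best_idx, best_score = None, float("-inf")
    for i, plan in enumerate(plans):
        finals = [rollout(m, x0, plan) for m in models]
        spread = statistics.pstdev(finals)   # ensemble disagreement as an uncertainty proxy
        if spread > max_spread:
            continue                          # too uncertain to act on
        mean_error = statistics.mean(abs(f - goal) for f in finals)
        score = -(mean_error + k * spread)    # pessimistic: penalise uncertainty
        if score > best_score:
            best_idx, best_score = i, score
    return best_idx


# Hypothetical ensemble: three models that disagree about how strong an action is.
ensemble = [lambda x, a, g=g: x + g * a for g in (0.8, 1.0, 1.2)]
plans = [[5.0, 5.0], [4.0, 4.0], [20.0]]
print(choose_plan(ensemble, 0.0, plans, goal=10.0))                   # → 0
print(choose_plan(ensemble, 0.0, plans, goal=10.0, max_spread=0.5))   # → None: ask for clarification
```

The single large step `[20.0]` is rejected outright because the models disagree too much about where it lands. None of this costs a real action.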

3) The landscape: four families of world models

World models show up across AI as a spectrum from action-coupled control to general-purpose prediction.

A) Latent dynamics (model-based RL)

These learn compact hidden state and a transition model (often action-conditional), then plan by “imagining” rollouts.

- Great for: robotics/control, efficiency, explicit planning

- Hard parts: distribution shift, compounding error in long rollouts
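Compounding error is easy to demonstrate. The following sketch uses hypothetical numbers: the real system grows 5% per step, while the learned model predicts 6%. That is a one-step relative error under 1%. When the model's own predictions are fed back in (an open-loop rollout), the error grows to roughly 21% by step 20.

```python
def rollout(rate: float, x0: float, horizon: int) -> list:
    # Open-loop rollout: each prediction is fed back in as the next input.
    xs = [x0]
    for _ in range(horizon):
        xs.append(rate * xs[-1])
    return xs


def relative_errors(true_rate: float, model_rate: float, x0: float, horizon: int) -> list:
    # Relative gap between the imagined trajectory and the real one, per step.
    truth = rollout(true_rate, x0, horizon)
    imagined = rollout(model_rate, x0, horizon)
    return [abs(m - t) / t for m, t in zip(imagined, truth)]


# Hypothetical numbers: the real system grows 5% per step, the learned model says 6%.
errors = relative_errors(true_rate=1.05, model_rate=1.06, x0=100.0, horizon=20)
for t in (1, 5, 10, 20):
    print(f"step {t:2d}: relative error {errors[t]:.1%}")
```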

B) Video / multimodal generative models

These predict rich future observations (frames/tokens/latents) and capture broad priors about the world.

- Great for: generality, high-fidelity prediction, multimodal grounding

- Hard parts: pixel fidelity ≠ physical consistency; controllability and evaluation

C) Implicit world modeling inside LLM/VLM agents

LLMs contain a lot of “world knowledge” and can reason about outcomes in language—but it’s often uncalibrated.

- Great for: abstraction, constraints, explanation, tool selection

- Hard parts: hallucinated dynamics, weak grounding, uncertainty calibration

D) Hybrid stacks (the enterprise path)

In practice, the systems that hold up in production tend to be hybrid:

- LLM for goals, decomposition, tool use, explanation

- domain-specific simulators for predictable subproblems (UI flows, code changes, logistics)

- evaluators to grade rollouts and gate actions

4) A concrete next-gen agent architecture

A pragmatic reference architecture looks like this:

- Perception: parse inputs (VLM/LLM extract entities, constraints, context)

- State estimator: maintain a belief state (objects, relations, policies, budgets)

- World model: predict transitions + uncertainty; run counterfactual rollouts

- Planner: choose a policy/plan under constraints

- Actor: execute tools/APIs/robot actions

- Evaluator/critic: compare observed outcome vs predicted; score quality/safety/cost

- Memory: store trajectories, failures, and successful policies

The evaluator is the keystone. Without evaluation, world models are just expensive imagination.
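As a rough sketch, the seven components can be wired together in one `step` method. Every component here is a plain callable, and the budget domain, action names and costs are hypothetical stand-ins. The planner simply keeps the most budget, and `act` fakes a tool call. The point is the data flow: the evaluator always sees a prediction and an observation side by side, and every step lands in memory.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical wiring of the seven components; each one is a plain callable here."""
    perceive: Callable[[str], dict]              # Perception: raw input -> entities/constraints
    estimate: Callable[[dict, dict], dict]       # State estimator: (belief, observation) -> belief
    simulate: Callable[[dict, str], tuple]       # World model: (belief, action) -> (next_state, uncertainty)
    plan: Callable[[dict, list], str]            # Planner: (belief, rollouts) -> chosen action
    act: Callable[[str], dict]                   # Actor: executes the action, returns what it observed
    evaluate: Callable[[dict, dict], float]      # Evaluator: (predicted, observed) -> score
    memory: list = field(default_factory=list)   # Memory: stored trajectories
    belief: dict = field(default_factory=dict)

    def step(self, raw_input: str, actions: list) -> float:
        self.belief = self.estimate(self.belief, self.perceive(raw_input))
        rollouts = [(a, *self.simulate(self.belief, a)) for a in actions]  # counterfactual rollouts
        action = self.plan(self.belief, rollouts)
        predicted = next(s for a, s, _ in rollouts if a == action)
        observed = self.act(action)
        score = self.evaluate(predicted, observed)
        self.memory.append({"belief": self.belief, "action": action,
                            "predicted": predicted, "observed": observed, "score": score})
        return score


# Toy usage with a hypothetical budget domain.
COSTS = {"buy_small": 10, "buy_large": 80}
agent = Agent(
    perceive=lambda text: {k: int(v) for k, v in (p.split("=") for p in text.split(","))},
    estimate=lambda belief, obs: {**belief, **obs},
    simulate=lambda s, a: ({"budget": s["budget"] - COSTS[a]}, 0.1),
    plan=lambda s, rollouts: max(rollouts, key=lambda r: r[1]["budget"])[0],  # keep the most budget
    act=lambda a: {"budget": 100 - COSTS[a]},  # stand-in for a real tool call
    evaluate=lambda predicted, observed: 1.0 if predicted == observed else 0.0,
)
print(agent.step("budget=100", ["buy_small", "buy_large"]))  # → 1.0
```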

5) What changes in enterprise AI (the “control plane” view)

If you’re building enterprise agents, the most important reframe is:

World models are not a feature. They’re infrastructure.

Treat them like any other platform component:

- versioned model + dataset + eval suite

- monitored drift and failure modes

- gated releases (“no merge unless eval improves”)

- safe fallbacks when uncertainty spikes

This is how “agentic workflows” stop being demos and start becoming systems you can operate.
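The four platform rules can be made concrete with a sketch of a versioned release record, a merge gate and an uncertainty fallback. The field names, version strings, metrics and the 0.3 threshold are all hypothetical. The gate rule used here ("same eval suite, no metric regresses, at least one improves") is one reasonable reading of "no merge unless eval improves", not a prescribed policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelRelease:
    model_version: str
    dataset_version: str
    eval_suite: str
    scores: dict  # metric name -> score; higher is better for every metric in this sketch


def release_gate(candidate: ModelRelease, baseline: ModelRelease, min_gain: float = 0.0) -> bool:
    """Hypothetical gate: promote only if the candidate was scored on the same suite,
    regresses on no baseline metric, and improves at least one by more than min_gain."""
    if candidate.eval_suite != baseline.eval_suite:
        return False  # scores from different suites are not comparable
    deltas = {m: candidate.scores.get(m, float("-inf")) - s for m, s in baseline.scores.items()}
    no_regression = all(d >= 0 for d in deltas.values())
    improved = any(d > min_gain for d in deltas.values())
    return no_regression and improved


def act_or_fallback(action: str, uncertainty: float, threshold: float = 0.3) -> str:
    # Safe fallback: hand off instead of acting when uncertainty spikes.
    return action if uncertainty <= threshold else "escalate_to_human"


base = ModelRelease("wm-v1", "data-v1", "suite-v2", {"one_step_acc": 0.91, "task_success": 0.72})
cand = ModelRelease("wm-v2", "data-v2", "suite-v2", {"one_step_acc": 0.93, "task_success": 0.72})
print(release_gate(cand, base))               # → True: one metric improved, none regressed
print(act_or_fallback("issue_refund", 0.45))  # → escalate_to_human
```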

The three evaluation layers you actually need

- Local predictive accuracy: given state S and action A, was S′ predicted correctly?

- Global consistency over time: does a 20-step rollout stay coherent, or does error accumulate?

- Task success under constraints: did we hit the goal while respecting policy, safety, latency, and budget?

In embodied settings, surveys repeatedly call out long-horizon consistency, error accumulation, and the need for evaluation beyond “looks right” as core open challenges.
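Each evaluation layer can be written as a small metric function. This sketch assumes scalar states, logged `(s, a, s_next)` transitions, and episode records with `goal_reached`, `cost` and `latency_ms` fields. All of these data shapes and field names are hypothetical. Layer 2 feeds the model its own predictions, which is what exposes accumulating error.

```python
from statistics import mean
from typing import Callable

Step = Callable[[float, float], float]  # (state, action) -> predicted next state


def one_step_error(model: Step, transitions) -> float:
    # Layer 1: given logged (s, a, s_next), how far off is each single-step prediction?
    return mean(abs(model(s, a) - s_next) for s, a, s_next in transitions)


def rollout_error(model: Step, s0: float, actions, observed_states) -> list:
    # Layer 2: roll the model forward on its own predictions and compare to the logs.
    errors, s = [], s0
    for a, s_obs in zip(actions, observed_states):
        s = model(s, a)
        errors.append(abs(s - s_obs))
    return errors


def task_success_rate(episodes, max_cost: float, max_latency_ms: float) -> float:
    # Layer 3: success means the goal was reached AND every constraint was respected.
    ok = [e["goal_reached"] and e["cost"] <= max_cost and e["latency_ms"] <= max_latency_ms
          for e in episodes]
    return sum(ok) / len(ok)


# Hypothetical logs: the real system applies 90% of each action; the model assumes 100%.
def model(s: float, a: float) -> float:
    return s + a


print(one_step_error(model, [(0.0, 1.0, 0.9), (0.9, 1.0, 1.8)]))     # small, about 0.1
print(rollout_error(model, 0.0, [1.0, 1.0, 1.0], [0.9, 1.8, 2.7]))  # grows: about 0.1, 0.2, 0.3
print(task_success_rate([
    {"goal_reached": True, "cost": 4.0, "latency_ms": 120},
    {"goal_reached": True, "cost": 30.0, "latency_ms": 90},   # goal hit, but over budget
    {"goal_reached": False, "cost": 2.0, "latency_ms": 60},
], max_cost=10.0, max_latency_ms=200))                               # one of three succeeds
```

A model can look fine on layer 1 and still fail layer 2. Here the same 0.1 per-step error triples over three steps.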

6) Where the next 12–24 months are heading (high-confidence bets)

1) Agents will ship with “imagination budgets”

Fast, cheap rollouts for exploration; slower, higher-confidence checks for final actions.

2) Ensemble world models become normal

Different models for different regimes:

- coarse predictor for search

- high-fidelity predictor for final validation

- symbolic constraint checker for hard rules
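A tiered ensemble also gives the "imagination budget" a concrete meaning: the number of expensive checks allowed per decision. This sketch is one possible arrangement, not a standard. Hard rules filter first because they are cheap and non-negotiable. The coarse predictor ranks every legal candidate, and only the top `fine_budget` candidates go to the high-fidelity predictor. The plan names, scores and rule are hypothetical.

```python
from typing import Callable, Optional, Sequence


def staged_select(candidates: Sequence[str],
                  coarse: Callable[[str], float],
                  fine: Callable[[str], float],
                  hard_rules: Sequence[Callable[[str], bool]],
                  fine_budget: int = 3) -> Optional[str]:
    """Filter by hard rules, rank with the cheap predictor, and re-score only the
    top `fine_budget` candidates with the expensive predictor."""
    # Symbolic constraint checker: hard rules are never traded off against scores.
    legal = [c for c in candidates if all(rule(c) for rule in hard_rules)]
    # Coarse predictor for search: cheap enough to run on every legal candidate.
    shortlist = sorted(legal, key=coarse, reverse=True)[:fine_budget]
    if not shortlist:
        return None
    # High-fidelity predictor for final validation: spent only on the shortlist.
    return max(shortlist, key=fine)


COARSE = {"scale_up": 0.90, "delete_old_logs": 0.95, "cache_results": 0.80,
          "retry": 0.50, "do_nothing": 0.10}   # cheap, noisy scores
FINE = {"scale_up": 0.60, "delete_old_logs": 0.99, "cache_results": 0.85,
        "retry": 0.70, "do_nothing": 0.10}     # expensive, more faithful scores
candidates = list(COARSE)


def no_deletes(plan: str) -> bool:
    return not plan.startswith("delete")       # hypothetical hard rule


print(staged_select(candidates, COARSE.get, FINE.get, [no_deletes], fine_budget=2))  # → cache_results
```

`delete_old_logs` scores best on both predictors but never reaches them, because the hard rule removes it first.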

3) State becomes the interface

Enterprises will standardise around:

- structured state schemas

- action schemas

- evaluation schemas

This reduces prompt spaghetti and makes reliability engineering possible.
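The following sketch shows what "state as the interface" can look like with typed schemas. It assumes a hypothetical refund domain, and the field names, the policy rule and the `check` helper are invented for illustration. A malformed action fails at construction time, and every proposed action gets a structured evaluation instead of free-text reasoning.

```python
from dataclasses import dataclass, field
from enum import Enum


class ActionType(str, Enum):
    REFUND = "refund"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class State:                      # state schema
    customer_id: str
    order_total: float
    refund_limit: float


@dataclass(frozen=True)
class Action:                     # action schema
    kind: ActionType
    amount: float = 0.0

    def __post_init__(self):
        if self.amount < 0:
            raise ValueError("amount must be non-negative")


@dataclass(frozen=True)
class Evaluation:                 # evaluation schema
    action: Action
    within_policy: bool
    predicted_cost: float
    notes: list = field(default_factory=list)


def check(state: State, action: Action) -> Evaluation:
    # Hypothetical policy: a refund may not exceed the order total or the refund limit.
    within = (action.kind != ActionType.REFUND
              or action.amount <= min(state.order_total, state.refund_limit))
    cost = action.amount if action.kind == ActionType.REFUND else 0.0
    notes = [] if within else ["refund exceeds order total or policy limit"]
    return Evaluation(action, within, cost, notes)


state = State(customer_id="c-123", order_total=40.0, refund_limit=50.0)
print(check(state, Action(ActionType.REFUND, 60.0)).within_policy)  # → False
print(check(state, Action(ActionType.ESCALATE)).within_policy)      # → True
```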

4) Training data shifts from static corpora → trajectories

The most valuable data is agent interaction:

- tool-call traces

- environment transitions

- failure cases

- evaluation labels
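Below is a minimal sketch of logging interaction data as trajectories. Each tool call is stored as one `(state, tool_call, next_state)` transition, together with a failure flag and the evaluator's label, and serialised as JSON Lines. The `TraceStep` fields and the label values are hypothetical. The point is that each line is directly usable as a training example for a transition model, and failures can be filtered out for separate study.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class TraceStep:
    state: dict        # environment state before the tool call
    tool_call: dict    # tool name and arguments
    next_state: dict   # environment state after the tool call
    failed: bool       # did the call or its effect fail?
    eval_label: str    # evaluator's verdict, e.g. "ok" or "timeout" (hypothetical labels)


def to_jsonl(steps) -> str:
    # One transition per line: the natural unit for training a transition model.
    return "\n".join(json.dumps(asdict(s), sort_keys=True) for s in steps)


def failure_cases(steps) -> list:
    # Failures are the most valuable slice: keep them separately for analysis and replay.
    return [s for s in steps if s.failed or s.eval_label != "ok"]


steps = [
    TraceStep({"cart": 0}, {"tool": "add_item", "args": {"sku": "A1"}}, {"cart": 1}, False, "ok"),
    TraceStep({"cart": 1}, {"tool": "checkout", "args": {}}, {"cart": 1}, True, "timeout"),
]
print(to_jsonl(steps))
print(len(failure_cases(steps)))  # → 1
```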

7) The real open problems (what to investigate next)

If you’re doing serious work here, focus on:

- Long-horizon temporal consistency (reducing drift)

- Uncertainty calibration (knowing when you don’t know)

- Causal + physical consistency metrics (not just pixel similarity)

- Action grounding and reversibility (minimising blast radius)

- Unified benchmarks across domains and modalities

Final takeaway

The next generation of AI will feel less like a chatbot and more like a closed-loop system: it predicts, plans, acts, measures, and improves.

World models are the missing piece that makes that loop scalable.

If you’re building agents in 2026, don’t just ask “what model should we use?”

Ask:

- What is our state representation?

- What outcomes can we simulate?

- What do we measure continuously?

- What do we refuse to do when uncertain?

That’s how you turn agent hype into operational capability.

Sources

- Ding et al., Understanding World or Predicting Future? A Comprehensive Survey of World Models (arXiv:2411.14499) — https://arxiv.org/abs/2411.14499

- Li et al., A Comprehensive Survey on World Models for Embodied AI (arXiv:2510.16732) — https://arxiv.org/abs/2510.16732

- Ha & Schmidhuber, World Models (2018) — https://arxiv.org/abs/1803.10122